Rohan Pearce | Computerworld

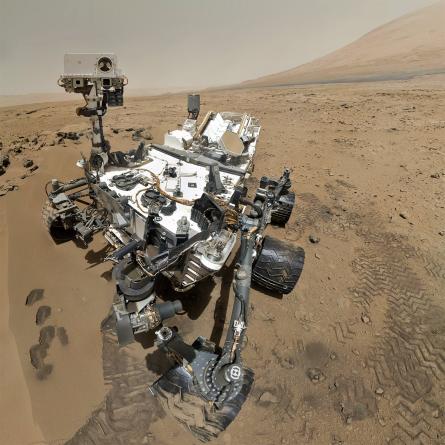

When NASA’s Curiosity rover landed on Mars at the end of its 563 million kilometre journey, it was a triumph for engineering. And it was also a triumph for IT.

The anxiety of watching the rover’s descent wasn’t confined to rocket scientists at the Jet Propulsion Laboratory; it was shared by NASA software engineer Khawaja Shams, a member of JPL’s Operations Planning Software Lab, who experienced what he describes as the toughest day in his career.

Shams has responsibility for the pipeline that makes sure that the data collected by Curiosity gets back to Earth where it can be used by scientists around the world. And in the lead-up to touchdown, he was responsible for building cloud architecture that could let millions of people round the world observe the historic landing, watching the data and images streamed back by Curiosity in real time.

Data gathered by Curiosity on Mars is sent to one of the orbiters around the planet, then from there to the Deep Space Network: a collection of satellite antennas 70 metres in diameter situated around the world, spaced 120 degrees apart so that the DSN can see in any direction at any time. From there, data is sent to JPL.

Within JPL there are always-on nodes that pre-process the data and upload it to an S3 bucket on Amazon’s cloud. While the data is going into S3, NASA is provisioning EC2 nodes that get the data from Amazon’s storage service and process it, then re-store the results in S3. From there, scientists around the world can download the data directly.
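The flow described above can be pictured with a minimal sketch. It is not JPL's code: the `Bucket` class is a hypothetical in-memory stand-in for an S3 bucket, the `raw/` and `processed/` key prefixes are assumed for illustration, and the "processing" step is a placeholder transform, since the article does not describe the real one.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Bucket:
    """Hypothetical in-memory stand-in for an S3 bucket (key -> bytes)."""
    objects: dict[str, bytes] = field(default_factory=dict)

    def put(self, key: str, body: bytes) -> None:
        self.objects[key] = body

    def get(self, key: str) -> bytes:
        return self.objects[key]

    def keys(self, prefix: str) -> list[str]:
        return sorted(k for k in self.objects if k.startswith(prefix))


def preprocess_and_upload(bucket: Bucket, name: str, downlink: bytes) -> str:
    """Always-on node: pre-process the downlinked data, then upload it."""
    key = f"raw/{name}"  # assumed key layout, not from the article
    bucket.put(key, downlink.strip())  # placeholder pre-processing
    return key


def process_pending(bucket: Bucket) -> list[str]:
    """On-demand worker: fetch raw objects, process, store results back."""
    written = []
    for raw_key in bucket.keys("raw/"):
        out_key = "processed/" + raw_key[len("raw/"):]
        if out_key in bucket.objects:
            continue  # already processed on an earlier pass
        # Placeholder transform standing in for the real image processing.
        bucket.put(out_key, bucket.get(raw_key).upper())
        written.append(out_key)
    return written
```

In this sketch the always-on node and the on-demand worker never talk to each other directly. They share only the bucket, which is what lets the number of workers grow or shrink independently of the upload side.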

On the day Curiosity touched down, the system succeeded magnificently by most measures. But getting there was a long journey, and it’s the story of how NASA moved from relying on what it could provide on-premises to using the cloud to do the heavy lifting. The implications go far beyond Curiosity: cloud computing is a key factor in letting NASA cope with the onslaught of data its missions, both in the Solar System and on Earth, are increasingly delivering to the world’s scientists.

But years before Curiosity tweeted that it had landed safely on the fourth planet from the Sun, JPL’s cloud odyssey had a somewhat less auspicious start, at least from Shams’ perspective. And it began with procurement gone wrong.

32GB of what?

“In 2008,” Shams told Computerworld Australia, “I had to order a set of servers — actually for the Curiosity mission — and I started off by sending an email to my favourite IT person saying, ‘Hey I need these machines.’ And then I got an email back saying ‘Okay, how much RAM do you need?’ ‘Okay well here’s how much RAM I need.’ How much CPU do you need? How much hard drive do you need? What operating system?

“It was a lot of emails that transpired in this process and it turned out — I’d asked, I think for 32 gigs of RAM and they thought I needed 32 gigs of hard drive space and we got the wrong order.

“So talking to Tom [Soderstrom, NASA IT CTO at JPL] and Jim [Rinaldi], our CIO, we went over and we did a retrospective on what this procedure was like.”

JPL’s ‘Seven Minutes of Terror’ and the cloud

During the opening keynote at Amazon Web Services’ re:Invent conference in Las Vegas, Shams took to the stage to recount the toughest night of his career: The landing of NASA’s Curiosity rover on Mars, and his role in making sure it would be shared with the world in real time.

“I’m going to take us back today to the night of August 5th, 2012, when 350 million miles away the Curiosity Mars rover is about to complete its journey to the surface of Mars. Engineers at NASA have worked tirelessly for nearly a decade to make this day a possibility. They have executed countless simulations, tested every individual component. But the system as a whole is about to be tested for the first time.

“We can feel success on the horizon, we can taste the victory. But the smallest mistake can take it all away. Tonight, everything must be perfect. Millions of people are watching this around the world, relying on us to safely land Curiosity. We have deployed Web experiences and video streaming solutions on the cloud to share tonight with the whole world. Tonight, everything must be perfect.

“And tonight we either succeed remarkably or fail spectacularly. We eagerly look towards the sky with anticipation because we know that it all comes down to the next seven minutes: The Seven Minutes of Terror.

“Having developed the data processing pipeline for Curiosity, tonight is the toughest test of my career. As soon as Curiosity lands, bits will flow through my pipeline to be processed, stored then distributed as images to the mission operators and scientists, as well as the rest of the world.

“My heart is pounding as I steal a glance at the AWS health dashboard to ensure that all the services that Curiosity relies on today are up and running. EC2: check. S3: check. SWF: check. VPC, ELB, autoscaling, Route53, SimpleDB, RDS: check. CloudFront, CloudFormation, CloudWatch: check.

“It’s show time.

“The world watches on the video streaming solution deployed on the cloud. A solution that could withstand the failure of over a dozen data centres and still deliver the live video stream from JPL to you. A solution that could scale over a terabit per second, but one that only requires us to provision and pay for exactly as much capacity as we need. A solution that is possible today only due to the invention of cloud computing.

“The excitement builds around the world as Curiosity enters the Martian atmosphere and its temperature rapidly rises to over 1600 degrees. I take a glance at our CloudWatch console to realise that our streaming solution has gone up to over 40Gbps. I calmly launch another CloudFormation stack to increase our capacity and register it to a Route53 domain.

“Curiosity deploys its parachute at Mach 2 and our streaming solution exceeds 70Gbps. I calmly launch another CloudFormation stack. Curiosity jettisons its parachute and activates its jetpack as it approaches the surface.

“Back on Earth we get a surprising call. The main JPL website, still running on traditional infrastructure, is crumbling under the crushing load of millions of excited users. We act quickly and route all JPL website traffic to the CloudFront-based Mars site. Our traffic exceeds 100Gbps but the cloud hasn’t even started to sweat. No. The sweat is only on my forehead as I anxiously await the next bandwidth milestone so I can add more capacity.

“We are a scant 21 metres from the surface of Mars as Polyphony, our data processing pipeline, provisions EC2 in anticipation of the bits [of data] coming to Earth. The Sky Crane lowers the rover down to the surface of Mars. Curiosity has landed on Mars.

“The whole world celebrates with us and we are just as thrilled. We have made every JPLer, no, every NASA employee, no, every American proud. We have made humanity proud, for we have landed a one-ton mobile lab on the surface of Mars. Mission accomplished.

“Or is it?

“The landing engineers have been successful tonight, but my test has just begun. The bits flow from Curiosity to the Odyssey orbiter to the Deep Space Network and then finally into JPL. An intricate orchestration process co-ordinated by Simple Workflow magically causes nodes at JPL to upload these bits onto an S3 bucket. EC2 nodes pick up these bits and process them into images.

“These elastically provisioned EC2 nodes will process images rapidly and within seconds of bits arriving to Earth, the first Mars images will be on your iPad, Android and laptop screens around the world. On August 5th we had two successes: we landed Curiosity on Mars and we shared the first pictures from Mars with you in real time. You saw the first pictures from Mars at the same time that we did.

“Mission accomplished.”

It became one of JPL’s earliest discussions about cloud computing. “Jim Rinaldi came up on the spot with the vision of: you, Khawaja, should not have to buy a machine, you should have to rent them. And you should be able to get them on demand. That was the vision that he painted,” Shams says.

“So we will provision instead of purchase. And that was I think the key vision that enabled us to adopt cloud computing because that’s effectively what it allowed us to do.

“It allowed us to come in and instead of me saying, ‘Well I need these machines’ and then communicating with a bunch of different people down the pipeline and then waiting for the order to be shipped, and then waiting for somebody to install it physically, and then someone to install the operating system, only to find out it was wrong, we just come in and say, ‘Well I need five machines on the cloud with this image and if it’s wrong, well, okay, that’s fine, let me just make two other clicks and correct that mistake.’ So that was one of the earliest conversations.”