Richard Nass | Design News

The very early days of computing consisted of big mainframes in a back room somewhere, with terminals connected to them. That era was ushered out by the PC/workstation revolution. When PCs became really popular, everyone wanted one on their desk.

That allowed them to run applications locally, rather than on the server/mainframe. Employees were equipped with just the applications that were required for their jobs, and files could be maintained locally on their own computers (away from prying eyes).

Workstations were popular at the time too, as they were used for jobs that were too compute-intensive for a PC. The Sun/Sparc workstations dominated the engineering space. (Do you remember the Solaris operating system?) Sun was the darling of the workstation space, selling pizza-box-sized platforms that generally ranged from $10,000 to $100,000.

Little by little, the PC nibbled away at the low end of the workstation market, as microprocessors from Intel (and AMD to some extent) became more and more powerful. Eventually, PCs reached parity with the workstations, and the six-figure platform was replaced by something that cost around $5,000. Not only that, but the same box could run your word processor, your spreadsheet, and your email programs. These PCs were generally connected to a server, but that was mostly for storage. The big engineering files needed more storage than was available on the PC, and they also needed to be accessed by multiple people.

Somewhere along the way, around the early 1990s, a box called the X Terminal was developed. The X Terminal had a little bit of compute power, but relied on the server to handle the applications. This was a workable solution because networking capabilities had advanced to the point where you could (sort of) run the application on the server and have it perform reasonably well. It was also a very cost-effective solution, as the X Terminals cost only about $1,000 each. The total cost of ownership, a popular term at the time, was far lower than putting a PC or workstation on every desk.

Unfortunately, the masses were already used to having their applications available on their desktops and felt that the X Terminals were a step backward. So there was something of a rebellion. During this time, PCs dropped in price and storage became more plentiful, so the move to PCs was an obvious one.

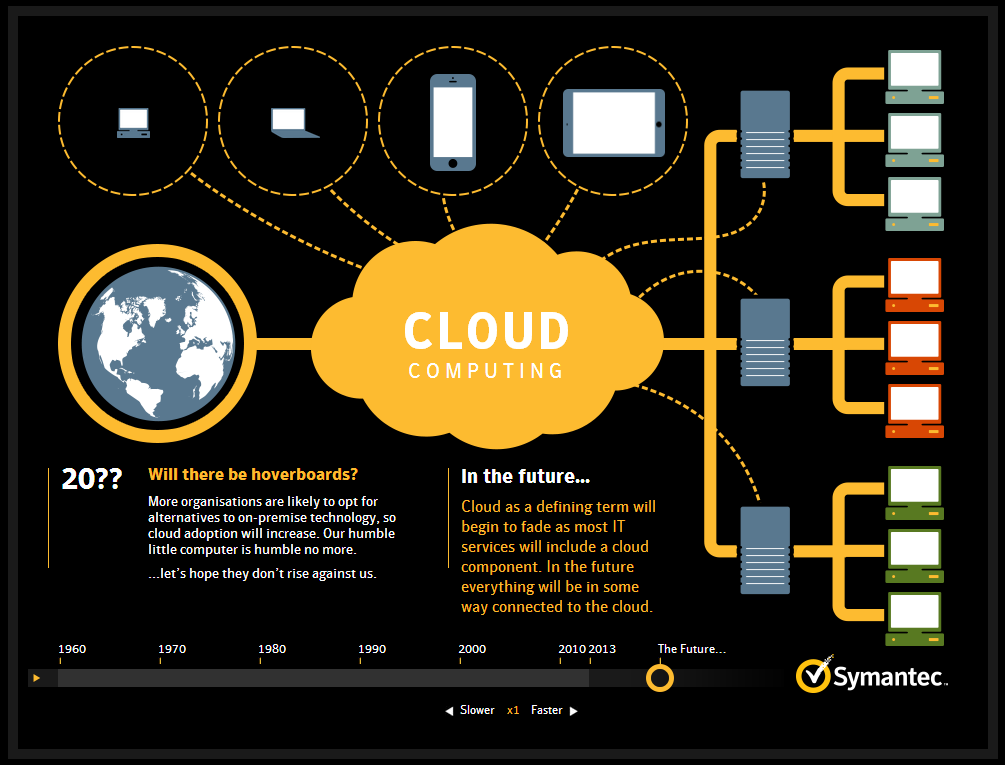

Fast forward to today, and there’s a trend gaining so much momentum that it’s not likely to be held back: cloud computing. Proponents of cloud computing will tell you that the technology offers the best of both worlds: it provides the storage and the ability to share files, yet lets users keep the PCs that they are loath to give up.

Even in the engineering space, where file sizes can be quite large, the cloud model works. That’s because the connections between the servers and the PCs are relatively fast, and the servers offer pretty high-end graphics features. I recently witnessed a demo by the Nvidia folks, where they were manipulating, in real time, some 3D CAD files based on Autodesk software that were housed on servers in different parts of the world. You would not have known that the files weren’t sitting locally on the PC.

The cloud-computing era is here. You can either embrace it or wait for the next wave of technology.